Automated, Reproducible and Secure Development & CI environments: Package Management (1/3)

Setting up a local development environment is a pain. Matching it with your CI configuration makes it twice as painful. Packages and tools to install, local configurations, secrets, deployment... Developers often lose hours, if not days, before being able to run a simple make build or deploy a local instance.

This series of articles will guide you through patterns and solutions to fully automate your project's development environments and ensure reproducibility both locally and on CI. Today's post is the first part, about package management. Next articles will explore secret management and local deployment / dynamic environments. Subscribe to get notified!

TL;DR:

- Nix is probably one of the most powerful solutions as of today, though a bit complex to learn and set up

- Containers (e.g. Docker and Podman) provide a solid solution with a modest cost and learning curve

- Application package managers by themselves are tricky to set up reproducibly across a team, thus requiring one of the above!

- Skip right to the full examples with Nix and containers (Docker/Podman)!

Development environment: a definition

You know what a development environment is, right? Let's give a more formal definition nonetheless, as it's not that trivial a question.

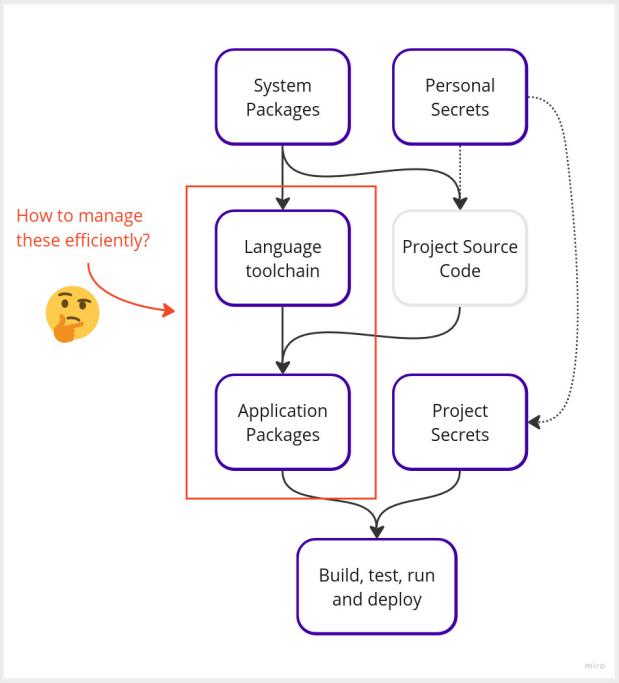

As a developer working on your project, your development environment would consist of:

- OS and system packages (Shell/Bash/Zsh, Git, ssh, etc.)

- Language toolchain(s): e.g. NodeJS and npm, Python with pip, Rust toolchain, etc. We could consider them system packages, but they are better represented as a category of their own

- Your personal secrets, e.g. your own SSH key, GPG key or AWS credentials

- The source code of one or more projects, versioned and fetched via git clone or similar

- Application packages installed through your language package manager, like npm or pip

- Some project-specific secrets your team has access to: database password, temporary credentials for deployment, etc.

- Finally, all of the above will allow you to build, test, run and deploy your application!

Managing all these packages, configurations and secrets is hard. It's even harder to get everyone on the same page! And what about reproducing all of it on CI? Let's see how to automate, reproduce and secure the above setup, starting with package management.

You know the pain, don't you?

Setting up all the tools, binaries, packages, libraries and so on just to start coding or run a local deployment of an application is a pain. I lived through this situation again and again on every single project I worked on. Despite efforts to use Docker or various tooling, the sheer amount of manual config (and hair loss) required just to start working on a project always gave me nightmares. And of course everyone ended up with slightly different versions of something, causing "It works on my machine" ad vitam aeternam.

Things get even funnier when you throw CI and secret management into the mix. Raise your hand if at least 2 of the following are true in your projects, teams or organization:

- Onboarding a new team member demands at least 4h of "initial setup" before they can code or run the simplest "it works" command

- You had to set up a bunch of secrets manually, copy/pasting them directly from HashiCorp Vault or your CI server into .gitignored files/directories

- The "It works on my machine" situation happens a bit too often

- CI configuration is Voodoo magic only Dave can manipulate safely

- When a bug occurs on CI, you pray you'll be able to reproduce it locally. As you often can't, you end up pushing, waiting 15+ min for CI to reach the problematic test and hoping the comma you added fixed it.

- Most of your secrets remain permanently in cleartext on your machine, exported as environment variables in .bashrc or stored in files under your home directory.

- Developers pass the database password around because following the "by the book" process to get access is endlessly long or downright impossible (taking care never to let the security guy know).

Let's explore ways to fully automate and ensure reproducibility of your development environments so that the same workflow can be used everywhere, CI included. Ideally, we want our setup to respect the following:

- A single action (a command to run, a button to click) drops us into a fully ready development environment

- Reproducibility. Every developer and our CI must have the same version of each package and the same testing deployment to avoid drift.

- Minimal one-time setup. You'll obviously need some manual one-time config for your personal secrets, to git clone your project and such. Let's keep that to a minimum.

Keep non-secret configuration and files in version control (e.g. Git)

Stating the obvious for some here, but I often see projects relying on manual setup that could easily be versioned (in a .env file or similar) and automated. As long as you don't commit sensitive data, everything else required to set up your development workflow should be versioned and automated. Linter config? Version of NodeJS to use? Non-secret configuration and environment variables? Local deployment script? In version control!
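As a minimal sketch of what "versioned and automated" can look like, assume the repository commits a .env file holding only non-secret values (for example a hypothetical APP_PORT=3000 line). The load_env function below is a hypothetical helper, not part of any tool mentioned in this post; it relies on Bash's set -a, which exports every variable assigned while the file is sourced:

```shell
#!/usr/bin/env bash
# Hypothetical helper: export every variable defined in a versioned,
# NON-SECRET .env file into the current shell.
load_env() {
  local env_file="${1:-.env}"
  if [ ! -f "$env_file" ]; then
    echo "load_env: $env_file not found" >&2
    return 1
  fi
  # 'set -a' marks every variable assigned while sourcing for export,
  # so child processes (npm, terraform...) see them too.
  set -a
  # shellcheck disable=SC1090
  . "$env_file"
  set +a
}

# Usage, e.g. from a setup script or a shell hook:
#   load_env .env
```

Tools like direnv can do the same job automatically when you cd into the project; either way, the point is that the file lives in Git rather than in each developer's head.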

Secrets are another story, of course. Don't version them unencrypted. You should rely on a secret manager to provide secrets securely; we'll explore this aspect in the next part of this series.

Automated and reproducible Package management

Packages are the various tools, binaries, libraries, etc. you'll need to build, test, run and deploy your project. Welcome to the fabulous world of package managers! Apart from choosing the right package manager(s), we'll also have to decide how to orchestrate them in our reproducible workflow to ensure they work both locally and on CI.

Let's assume we already have a minimal setup done: we installed our OS with basic tooling like Git, Bash and Docker and cloned our project source code locally¹. Now we want to run a single command or push a single button to get a fully ready development environment.

We're gonna achieve that by describing the packages we want and having a tool install them all for us!

Universal package manager: Nix and Flakes

Nix is a universal package manager and system configuration tool. Nix Flakes can be used to run development shells (among lots of other great things, like creating container images or installing a full-blown OS with NixOS)²

Nix Flakes use a flake.nix file to describe your environment and packages, for example:

```nix
{
  description = "NodeJS app with Terraform and MySQL";

  outputs = { self, nixpkgs }:
    let
      pkgs = nixpkgs.legacyPackages.x86_64-linux;
    in {
      devShells.x86_64-linux.default = pkgs.mkShell {
        packages = [
          # Here are your packages !
          pkgs.nodejs_20
          pkgs.nodePackages.npm
          pkgs.terraform
          pkgs.mysql80
          pkgs.awscli2
        ];

        # You can run commands when your shell starts
        shellHook = ''
          echo "Welcome to Nix dev shell"
          echo "Installing dependencies..."
          npm install
          echo "All ready !"
        '';
      };
    };
}
```

Local usage

Running nix develop drops us into a ready-to-use shell with all binaries installed:

```shell
$ nix develop
# Welcome to Nix dev shell
# ...
# All ready !

$ node --version
v20.5.1

$ terraform --version
Terraform v1.5.5
```

We can now build, run and test our application with all binaries available 🪄

Wait, how did we pin versions? Nix also generates a flake.lock file we can version along with flake.nix, ensuring reproducibility: next time we or anyone else runs nix develop, they'll get exactly the same packages. Even better, in a Nix dev shell you still have access to your own filesystem (unlike containers), so your ~/.ssh, ~/.config and other local files remain available without further config!

CI usage

nix develop can also be used on CI. For example, with a GitLab CI .gitlab-ci.yml:

```yaml
job:
  image: nixos/nix:2.15.2
  script:
    # Run command under Nix development shell.
    # Flakes and the nix command are still flagged as experimental,
    # so enable them explicitly in case the image doesn't.
    - nix --extra-experimental-features "nix-command flakes" develop -c make build
```

Cache management may be a bit tricky though. Tools like Cachix, persisting /nix/store between CI jobs or building a container image from a Nix derivation will probably be necessary for efficient CI usage.

Nix: conclusion

Nix requires a bit of initial setup and its learning curve is steep to say the least, but it's definitely one of the most powerful tools available today. We'll explore Nix usage in advanced CI setups in a later blog post 😉

See the examples below to try it out yourself!

Some links to get further with Nix:

- devenv - Set up development environments with Nix

- Nix pills - Series of tutorials introducing Nix

- Nix first steps - Another series of tutorials to get started with Nix

Containers: Docker, Podman and alike

Using containers is a good compromise in terms of complexity and learning curve, but they are somewhat limited compared to Nix and come with their own set of complexities (mounting volumes, managing permissions, sharing images...). However, they're very easy to set up on CI, as most CI systems use containers natively.

We just need to build a container image and run a shell inside it for our environment to be ready. For example, using Docker or Podman with a Dockerfile/Containerfile such as:

```dockerfile
# Reference images for multi-stage build
FROM hashicorp/terraform:1.5.5 AS terraform
FROM amazon/aws-cli:2.13.9 AS aws

# NodeJS base image
FROM node:20.5.1-bookworm

# Terraform
COPY --from=terraform /bin/terraform /bin/terraform

# AWS
COPY --from=aws /usr/local/aws-cli/ /usr/local/aws-cli/
COPY --from=aws /usr/local/bin/ /usr/local/bin/

# MySQL client (apt-get is the script-friendly apt frontend;
# removing package lists keeps the image smaller)
RUN apt-get update \
    && apt-get install -y --no-install-recommends default-mysql-client \
    && rm -rf /var/lib/apt/lists/*
```

And a docker-compose.yml file with bind mounts and a few configs:

```yaml
services:
  dev-container:
    image: registry.mycompany.org/myapp:1.0
    build:
      context: .
    command: ["bash"]
    volumes:
      # Mount local AWS credentials
      - ${HOME}/.aws/config:/root/.aws/config:ro
      - ${HOME}/.aws/credentials:/root/.aws/credentials:ro
      # Mount project directory
      - ${PWD}:/app
      # Keep container home as a named volume to persist shell history and such
      - dev-container-home:/root
    # Shell will drop us in this directory
    working_dir: /app

volumes:
  dev-container-home:
```

All that remains is to build and publish our image with docker compose build and docker compose push so anyone can use it, and we're done. You can even run dev containers in Kubernetes or in a cloud environment through a dedicated platform.

Using containers may have some limitations and complexities though:

- A container-based environment is reproducible only if you use the exact same image each time, because building an image is usually not reproducible (for instance, apt-get install fetches whatever package versions are current at build time). Publish your image so it can be reused, and avoid building a new image each time you run a development shell!

- Containers are isolated from your OS by default, which can add setup complexity.

- You'll have to mount volumes to access project source code and personal secrets, with the caveat that permissions inside containers may differ from the host's.

- Podman handles this better than Docker: with rootless Podman, root inside the container maps to the user running the container, so files written to bind mounts belong to you on the host, avoiding some tricky permission issues

- You'll probably need to publish ports to run your application locally in a container

- Your IDE will likely run outside the container; otherwise, you'll need to access an IDE through a web browser.

See below for some concrete usage examples.

Local usage

Running:

```shell
# Docker
docker compose run dev-container

# Podman
podman-compose run dev-container
```

drops us into our fully ready development shell. We can now build, run and test our application with all our packages available. 🥳

CI usage

Using containers on CI is usually easy, as most CI providers work with containers. We just have to use the same image locally and on CI to reproduce the same workflow! Practical, isn't it?

Containers: conclusion

Containers are easy to run and deploy, but come with some limitations. You'll need to ensure built images are shared with your team, which may require additional setup.

Application Package Managers

Your language ecosystem probably comes with a package manager: NodeJS has npm, Python has pip, Java has Maven, Ruby has RubyGems, and so on. They provide a certain amount of automation and reproducibility, but they're far from perfect:

- They only manage packages for their own ecosystem. You won't be able to manage system packages, and your project likely relies on tools outside their scope, risking significant drift.

- The same language or interpreter version must be used everywhere, which is no easy feat. Language interpreters and toolchains are usually installed via a system package manager such as apt or apk, and it's likely everyone has their own version, unless you use a version manager as described below.

Application package managers are frequently coupled with other solutions like Docker or Nix to ensure better reproducibility. By themselves, they probably won't provide a sufficient level of automation and reproducibility, leaving you with a significant amount of manual setup and drift between developers and the CI environment.

Nonetheless, make sure to follow these best practices with application package managers:

- Always pin dependency versions (ensure a fixed version is installed). This may be achieved natively using lock files (e.g. package-lock.json for npm) or by specifying a fixed version in the dependency file, e.g. a pip requirements.txt entry like docopt == 0.6.1.

- Make sure everyone is using the same version of the package manager and of the related language or interpreter. You don't want some developers using Node 20.x while others have 18.x. Ecosystems usually provide tools to switch or specify versions, such as nvm for Node or rustup for Rust. See Awesome Version Managers for an overview of possibilities.

- Regularly update your dependencies to fix CVEs and keep pace. Tools like Renovate can help with that.
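To make the "same interpreter version" rule enforceable rather than a convention, a build script or CI job can fail fast when the local runtime differs from the pinned one. The sketch below assumes a committed .nvmrc containing an exact version such as 20.5.1 (the file nvm reads on nvm use); check_node_version is a hypothetical helper name, and version aliases like lts/* are deliberately out of scope:

```shell
#!/usr/bin/env bash
# Hypothetical helper: compare the active Node.js version with the exact
# version pinned in a versioned .nvmrc file. Aliases such as "lts/*" are
# NOT supported by this sketch.
check_node_version() {
  local nvmrc="${1:-.nvmrc}"
  # Version to check; defaults to the node binary found on PATH.
  local actual="${2:-$(node --version 2>/dev/null)}"
  local expected

  if [ ! -f "$nvmrc" ]; then
    echo "check_node_version: $nvmrc not found" >&2
    return 1
  fi
  if [ -z "$actual" ]; then
    echo "check_node_version: node not found on PATH" >&2
    return 1
  fi

  # Strip whitespace/newlines, then normalise both values to "vX.Y.Z",
  # the format printed by `node --version`.
  expected="$(tr -d '[:space:]' < "$nvmrc")"
  expected="v${expected#v}"
  actual="v${actual#v}"

  if [ "$actual" != "$expected" ]; then
    echo "Node $expected required (from $nvmrc), found $actual" >&2
    return 1
  fi
}

# Usage, e.g. at the top of a build script or CI job:
#   check_node_version .nvmrc || exit 1
```

nvm use already switches to the .nvmrc version on a developer's machine; a check like this mainly protects CI runners and machines where nvm isn't installed.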

See the examples below for real use cases!

Local usage

Local usage for application package managers is usually straightforward. A single command (e.g. npm install or pip install -r requirements.txt) installs all dependencies, and you can start coding right away.

CI usage

Package managers are easy to use on CI, but cache management will probably require a bit of work. Luckily, plenty of resources and tools exist to efficiently manage the cache of the most common application package managers.
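One common pattern, sketched below under stated assumptions, is to key the dependency cache on a hash of the lockfile: the cache is reused as long as dependencies are unchanged and invalidated as soon as the lockfile changes. Many CI systems provide this natively (GitLab CI's cache:key:files, or hashFiles() in GitHub Actions); cache_key is a hypothetical helper for setups that don't. It assumes GNU coreutils' sha256sum is available (on macOS, shasum -a 256 plays the same role):

```shell
#!/usr/bin/env bash
# Hypothetical helper: build a CI cache key from lockfile contents, so the
# dependency cache (e.g. ~/.npm) is reused until a lockfile changes.
cache_key() {
  if [ "$#" -lt 2 ]; then
    echo "usage: cache_key <prefix> <lockfile>..." >&2
    return 1
  fi
  local prefix="$1"
  shift

  local f
  for f in "$@"; do
    if [ ! -f "$f" ]; then
      echo "cache_key: $f not found" >&2
      return 1
    fi
  done

  # Hash all lockfiles together; keep the first 16 hex chars for brevity.
  local digest
  digest="$(cat "$@" | sha256sum | cut -c1-16)"
  echo "${prefix}-${digest}"
}

# Usage, e.g. in a CI script:
#   key="$(cache_key npm package-lock.json)"
```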

We won't be able to go in-depth on this subject, as each package manager may require its own CI setup and cache management, but feel free to ask in the comments and I'll try to provide directions.

Application package managers: conclusion

Application package managers are the foundation of any development environment setup, but far from enough to ensure proper automation and reproducibility. You'll probably need additional solutions like Nix or containers to fully automate your workflow.

Cloud Development Environments

Platforms are flourishing to answer the need for development environments. They usually provide cloud-based environments running in containers, among various other features and integrations. If you don't mind vendor lock-in and spending a bit of money, they're worth a look. A few well-known platforms:

Searching for "Cloud development environment" will likely yield tons of results; pick your favorite!

What shall I choose for my project? Comparison table

This is a hard question. Here's a comparison table of benefits, complexity and cost for each solution:

| Package manager | Automation | Reproducibility | Ease of use | Cost |
|---|---|---|---|---|
| Nix Flakes | ⭐⭐⭐ | ⭐⭐⭐ | ⭐ | Free |
| Containers (Docker, Podman...) | ⭐⭐ | ⭐⭐ | ⭐⭐ | Free |
| Application package manager | ⭐⭐⭐ | ⭐ | ⭐⭐⭐ | Free |
| Cloud development environment platforms | ⭐⭐ | ⭐⭐ | ⭐⭐ | Paid |

- Nix:
  - Pros: very powerful, best reproducibility
  - Cons: hard to learn (but definitely worth it)

- Containers (Podman, Docker...):
  - Pros: easy to learn and set up, natively integrated in most CI tools
  - Cons: some limitations and complexities (isolation, permissions, volume mounts...), reproducibility not guaranteed

- Application package managers:
  - Pros: easy to use and set up
  - Cons: not reproducible, limited to their own ecosystem

- Cloud development environment services:
  - Pros: easy to use, lots of integrations
  - Cons: additional setup, vendor lock-in, costly

Wait, what about secret management?

Package management is already a long subject, and secret management is another complex subject altogether. We'll explore it in the next part of this series. Subscribe to keep in touch!

Full examples

Below are full examples you can try out yourself. Clone the repository, run the commands and see for yourself!

Nix Flakes

Nix flakes allow you to describe your environment using flake.nix. It only requires Nix to be installed (with flakes enabled), and it does the rest!

- Clone the DevOps Examples repository:

```shell
git clone git@github.com:PierreBeucher/devops-examples.git
cd devops-examples/automated-reproducible-dev-environment
```

- Run a Nix shell:

```shell
nix develop
```

- Play with the application:

```shell
npm run build
npm run start
# Open web browser at localhost:3000
npm run test
```

See README for details.

Container: Docker and Podman

Container images (e.g. Docker) can provide a development environment by running a shell directly in a container. You just need to build an image and share it with your team to get everyone on the same page.

- Clone the DevOps Examples repository:

```shell
git clone git@github.com:PierreBeucher/devops-examples.git
cd devops-examples/automated-reproducible-dev-environment
```

- Build the image and run a shell in the container:

```shell
# Docker
docker compose -f docker-compose.dev-container.yml build
docker compose -f docker-compose.dev-container.yml run dev-container

# Podman
podman-compose -f docker-compose.dev-container.yml build
podman-compose -f docker-compose.dev-container.yml run dev-container
```

- Play with the application:

```shell
npm run build
npm run start
# Open web browser at localhost:3000
npm run test
```

See README for details.

What's next

You're now able to fully automate and reproduce your development environment locally and on CI! Your development workflow will gain efficiency and stability, congratulations.

Next time we'll talk about secret management: how to load secrets and credentials efficiently and securely into our environments since, in most workflows, packages go hand in hand with secrets. We'll also cover efficient local deployment and dynamic environments. Don't forget to subscribe to get notified!

¹ OS installation and setup can be automated as well, but we're not gonna focus on this aspect for now. OS-level config is usually left to individual preference: what we really want is to ensure everyone has the same development environment regardless of their base OS config. It is nonetheless possible to automate OS config with tools like NixOS or Ansible.

² Nix is awesome. Really.

Great post! One question – don’t you need to pre-setup Nix in order for flake-related stuff to work (and also `nix-command`, for `nix develop`)? AFAIK they’re still marked as experimental

Thanks ! Indeed, you have to install Nix first (see here: https://nixos.org/download/) and Flakes have been “experimental” for quite some time despite being stable enough (community is kinda divided on the subject). I didn’t go into too much details as goal is to show effective usage without going into not-so interesting details (installation is described well enough on their docs)

Also you might want to look at Flox for a similar, easier to use tool. I wrote a few posts about it:

– https://blog.crafteo.io/2024/10/25/flox-better-alternative-to-dev-containers/

– https://blog.crafteo.io/2025/01/10/flox-novops-development-packages-secrets-and-multi-environment-management-all-in-one/